Process Mining

Over the last two decades, process mining has emerged as a new scientific discipline on the interface between process models and event data. The research we started in the late 1990s in Eindhoven led to a wave of process mining tools, including Celonis, Disco, Apromore, UiPath Process Mining (ProcessGold), SAP Signavio Process Intelligence, ARIS Process Mining (Software AG), Abbyy Timeline, Appian Process Mining (LanaLabs), IBM Process Mining (myInvenio), Minit (Microsoft), Mehrwerk, QPR Process Mining, Skan, UltimateSuite, Mindzie, Nintex, PuzzleData ProDiscovery, Kofax, LiveJourney, iGrafx, Logpickr, Decisions Process Mining, Synesa, Everflow (Pega), Process Discovery Automation Anywhere (FortressIQ), BusinessOptix, Datricks, PAFnow (Celonis), DCR Process Mining, Oniq, Noreja, Mavim Process Mining, Process Science, Promease, Scout Process Discovery, Workfellow, Stereologic, UpFlux, Worksoft Process Mining, etc., and open-source tools like ProM, ProM Lite, RapidProM, OCPM, OCpi, Cortado, PM4knime, bupaR, etc. Note that many of the ideas first implemented in ProM were later adopted by other tools. On the one hand, conventional Business Process Management (BPM) and Workflow Management (WfM) approaches and tools are mostly model-driven, with little consideration for event data. On the other hand, Data Mining (DM), Business Intelligence (BI), and Machine Learning (ML) focus on data without considering end-to-end process models. Process mining aims to bridge the gap between BPM and WfM on the one hand and DM, BI, and ML on the other hand. Here, the challenge is to turn torrents of event data ("Big Data") into valuable insights related to process performance and compliance. Fortunately, process mining results can be used to identify and understand bottlenecks, inefficiencies, deviations, and risks.

We are eager to collaborate with external parties on topics related to process mining. It is a very generic technology that can be applied in many fields (production, logistics, finance, auditing, healthcare, energy, e-learning, e-government, etc.). However, we get flooded by people who have data and processes but have no clue about process mining. Before contacting us, please study the slides on how to get started. (Here is the PowerPoint file.) Besides the slides, there are many ways to get more information about process mining, e.g., via the process mining website, by reading the book Process Mining: Data Science in Action, and by taking the joint RWTH/Celonis course Process Mining: From Theory to Execution. To go deeper, one can also take the Coursera Process Mining Course to get a comprehensive overview and a deeper understanding of the concepts and techniques. See also my publications page for much more information.

About the Origins of Process Mining

I started to work on process mining in the late 1990s. Until 1998, I strongly believed that workflow management systems would be used everywhere. The dream was to simply model processes (e.g., using Petri nets or predecessors of BPMN) and automatically generate a working information system supporting these processes. At the time, many workflow management systems were already available (hundreds, actually). However, around 1998, I noticed that workflow technology suffered from the problem that process models made by hand had little to do with the real processes. This explained why many organizations bought the technology but never used it in production. Therefore, I started to focus on learning process models from event data. In 1998, I was developing the first algorithms to learn Petri nets from example traces. Discovering a sequential process (e.g., a directly-follows graph (DFG) or a Markov chain) is easy. However, it is very difficult to discover a process with concurrency, especially if one only has example traces. None of the then-existing approaches were able to handle this setting. This led to the Process Design by Discovery: Harvesting Workflow Knowledge from the Ad-hoc Executions project, in which the term process mining was first coined. The project was approved in 1999 and funded by BETA. At the start, there was a tight connection with workflow management, i.e., I saw process mining as a way to overcome the limitations of workflow management systems. Sometimes, we used the term workflow mining next to the term process mining. Later, I realized that process mining also has value in settings where one would never consider using workflow systems. Interestingly, in recent years, the connection between process mining and automation has witnessed a revival with the uptake of digital twins, execution management, action-oriented process mining, and RPA.
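
To illustrate why discovering a sequential model is the easy part, the following minimal Python sketch counts directly-follows relations in a small, made-up event log. The log and the function name `discover_dfg` are hypothetical; each trace is assumed to be the list of activity names recorded for one case.

```python
from collections import Counter


def discover_dfg(log):
    """Count how often activity a is directly followed by activity b."""
    dfg = Counter()
    for trace in log:
        # zip pairs each event with its direct successor in the same trace
        for a, b in zip(trace, trace[1:]):
            dfg[(a, b)] += 1
    return dfg


# Hypothetical event log: one list of activities per case.
log = [
    ["register", "check", "pay", "ship"],
    ["register", "pay", "check", "ship"],
    ["register", "check", "pay", "ship"],
]

for (a, b), n in sorted(discover_dfg(log).items()):
    print(f"{a} -> {b}: {n}")
```

Because `check` and `pay` occur in both orders, the resulting DFG has edges in both directions between them. From these counts alone, one cannot tell whether the two activities are concurrent or form a loop. This hints at why discovering concurrency requires more than counting direct successions.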

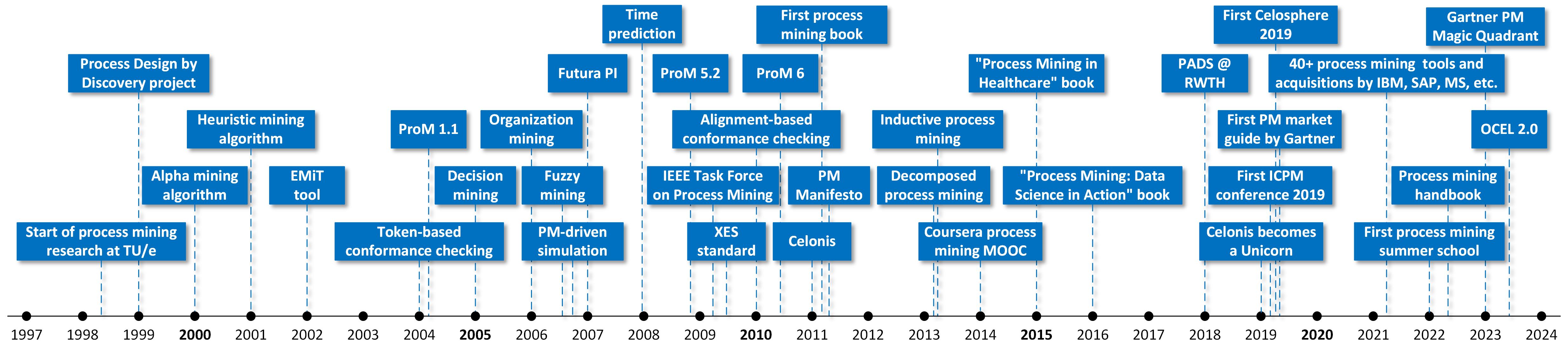

The timeline shows the development of process mining from its inception in the late 1990s until 2024. Interestingly, many of the original questions posed over two decades ago are still valid. What is the actual process, and how does it differ from the assumed or desired process? What are the main bottlenecks, and why are they there? What are the main compliance problems, and what do they have in common? Can we predict performance and conformance problems? What happens if we make this intervention? One could argue that the period 2005-2007 was the golden age of process mining, when many things were invented, such as conformance checking, fuzzy mining with token animation, decision mining, organizational mining, predictive process mining, etc. Although process mining techniques answering all of the above questions have been around for quite some time, the underlying problems are notoriously hard and still not fully solved.

Business Process Management (BPM)

Business Process Management (BPM) is the discipline that combines knowledge from information technology and knowledge from management sciences and applies this to operational business processes. It has received considerable attention in recent years due to its potential for significantly increasing productivity and saving costs. Moreover, today there is an abundance of BPM systems. These systems are generic software systems that are driven by explicit process designs to enact and manage operational business processes.

BPM can be seen as an extension of Workflow Management (WFM). WFM primarily focuses on the automation of business processes, whereas BPM has a broader scope: from process automation and process analysis to operations management and the organization of work. On the one hand, BPM aims to improve operational business processes, possibly without the use of new technologies. For example, by modeling a business process and analyzing it using simulation, management may get ideas on how to reduce costs while improving service levels. On the other hand, BPM is often associated with software to manage, control, and support operational processes. This was the initial focus of WFM. However, traditional WFM technology aimed at the automation of business processes in a rather mechanistic manner, without much attention to human factors and management support.

See Business Process Management: A Comprehensive Survey. ISRN Software Engineering, pages 1-37, 2013. doi:10.1155/2013/507984.

Petri Nets and Other Models of Concurrency

Petri nets are the oldest and best-investigated process modeling language allowing for concurrency. The graphical notation is intuitive and simple; at the same time, Petri nets are executable, and many analysis techniques can be used to analyze them.
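
The firing rule that makes Petri nets executable can be sketched in a few lines of Python. This is a minimal illustration, assuming place/transition nets with arc weight 1 and markings represented as `collections.Counter` objects; the class `PetriNet` and the example net are hypothetical.

```python
from collections import Counter


class PetriNet:
    def __init__(self, transitions):
        # transitions: name -> (input places, output places); every arc has weight 1
        self.transitions = transitions

    def enabled(self, marking, t):
        # t is enabled if each input place holds at least as many tokens as it needs
        pre, _ = self.transitions[t]
        return all(marking[p] >= n for p, n in Counter(pre).items())

    def fire(self, marking, t):
        # firing consumes tokens from input places and produces tokens in output places
        if not self.enabled(marking, t):
            raise ValueError(f"transition {t!r} is not enabled")
        pre, post = self.transitions[t]
        new = Counter(marking)
        new.subtract(pre)
        new.update(post)
        return +new  # unary plus drops places that are left with zero tokens


# Hypothetical net: a parallel split into a and b, followed by a synchronizing join.
net = PetriNet({
    "split": (["start"], ["p1", "p2"]),
    "a": (["p1"], ["p3"]),
    "b": (["p2"], ["p4"]),
    "join": (["p3", "p4"], ["end"]),
})
m = Counter({"start": 1})
m = net.fire(m, "split")
print(net.enabled(m, "a"), net.enabled(m, "b"))  # True True
m = net.fire(m, "b")
m = net.fire(m, "a")
m = net.fire(m, "join")
print(m)  # Counter({'end': 1})
```

After `split` fires, both `a` and `b` are enabled and may occur in either order, so the net expresses concurrency explicitly rather than listing every interleaving.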

Petri nets have a strong theoretical basis. Moreover, a wide range of powerful analysis techniques and tools exists. Petri nets take concurrency as a starting point rather than an afterthought. However, this succinct model has difficulty capturing data-related and time-related aspects. Therefore, various types of high-level Petri nets have been proposed. Colored Petri nets (CPNs) are the most widely used Petri-net-based formalism that can deal with data-related and time-related aspects. Tokens in a CPN carry a data value and have a timestamp. The data value, often referred to as color, describes the properties of the object modeled by the token. The timestamp indicates the earliest time at which the token may be consumed. Transitions can assign a delay to produced tokens. In this way, waiting and service times can be modeled. A CPN may be hierarchical, i.e., transitions can be decomposed into subprocesses. In this way, large models can be structured. CPN Tools is a toolset providing support for the modeling and analysis of CPNs (www.cpntools.org).
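
The timed part of this semantics can be sketched as follows. The helper `fire_timed` and the `Token` class are hypothetical and not part of CPN Tools. The sketch assumes one token per input place and a single fixed delay for all produced tokens. It ignores guards, arc inscriptions, and the nondeterministic choice between competing tokens.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    color: tuple      # data value carried by the token
    timestamp: float  # earliest time at which the token may be consumed


def fire_timed(inputs, now, delay, produce):
    """Fire a transition consuming the given tokens (hypothetical helper)."""
    # The transition can fire only once every consumed token is available.
    fire_time = max([now] + [tok.timestamp for tok in inputs])
    # produce maps input colors to output colors; outputs are delayed by `delay`.
    out_colors = produce(*(tok.color for tok in inputs))
    return fire_time, [Token(c, fire_time + delay) for c in out_colors]


# Hypothetical example: an order is handled by a clerk, taking 2.5 time units.
order = Token(("order", 17, 250.0), timestamp=3.0)
clerk = Token(("clerk", "Ann"), timestamp=5.0)
t, out = fire_timed([order, clerk], now=0.0, delay=2.5,
                    produce=lambda o, c: [("handled", o[1]), c])
print(t)    # 5.0
print(out)  # both produced tokens carry timestamp 7.5
```

The order token is available at time 3 but the clerk only at time 5, so the order waits 2 time units. The delay of 2.5 then models the service time, after which the clerk token becomes available again.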

See Petri Nets World and the book W.M.P. van der Aalst and C. Stahl. Modeling Business Processes: A Petri Net Oriented Approach. MIT Press, Cambridge, MA, 2011 for more on Petri nets.

Workflow Patterns and WFM Systems

The Workflow Patterns initiative is a joint effort of Eindhoven University of Technology and Queensland University of Technology, which started in 1999. The aim of this initiative is to provide a conceptual basis for process technology. In particular, the research provides a thorough examination of the various perspectives (control flow, data, resource, and exception handling) that need to be supported by a workflow language or a business process modeling language. The results can be used for examining the suitability of a particular process language or workflow system for a particular project, assessing the relative strengths and weaknesses of various approaches to process specification, implementing certain business requirements in a particular process-aware information system, and as a basis for language and tool development.

The web site www.workflowpatterns.com provides detailed descriptions of patterns for the various perspectives relevant for process-aware information systems: control-flow, data, resource, and exception handling. In addition, you will find detailed evaluations of various process languages, (proposed) standards for web service compositions, and workflow systems in terms of these patterns.

Based on a rigorous analysis of existing workflow management systems and workflow languages, we developed the workflow language YAWL (Yet Another Workflow Language). YAWL is a powerful workflow language based on the workflow patterns and Petri nets. It is supported by an open-source environment developed partly in collaboration with industry.

Simulation

Simulation provides a flexible approach to analyzing business processes. Through simulation experiments, various what-if questions can be answered, and redesign alternatives can be compared with respect to key performance indicators. Increasingly, simulation techniques will need to incorporate actual event data. Moreover, there will be a shift from off-line analysis at design time to on-line analysis at run-time. Hence, the link between process mining and simulation is obvious.
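
A what-if comparison of two redesign alternatives can be sketched with a small discrete-event simulation. The Python code below is a hypothetical example, not a recommended simulation tool. It assumes a single FIFO queue, exponentially distributed interarrival and service times, and made-up rates. It compares the average waiting time (the KPI here) for one versus two resources.

```python
import heapq
import random


def simulate(arrivals, service_times, servers):
    """FIFO queue with identical resources; returns the average waiting time."""
    free_at = [0.0] * servers  # time at which each resource becomes available
    heapq.heapify(free_at)
    total_wait = 0.0
    for arrival, service in zip(arrivals, service_times):
        earliest = heapq.heappop(free_at)  # resource that is free first
        start = max(arrival, earliest)
        total_wait += start - arrival
        heapq.heappush(free_at, start + service)
    return total_wait / len(arrivals)


def generate_cases(n, arrival_rate, service_rate, seed=42):
    """Generate sorted arrival times and service times (assumed exponential)."""
    rng = random.Random(seed)
    t, arrivals, services = 0.0, [], []
    for _ in range(n):
        t += rng.expovariate(arrival_rate)
        arrivals.append(t)
        services.append(rng.expovariate(service_rate))
    return arrivals, services


arrivals, services = generate_cases(10_000, arrival_rate=0.9, service_rate=1.0)
for servers in (1, 2):
    avg = simulate(arrivals, services, servers)
    print(f"{servers} resource(s): average waiting time = {avg:.2f}")
```

Both alternatives are evaluated on the same generated cases (common random numbers), so the difference in waiting time stems from the redesign rather than from different random samples. In practice, the rates and distributions would be estimated from event data instead of being chosen by hand.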

See my Business Process Simulation Survival Guide for more information on modern forms of simulation and the pitfalls people typically make when using simulation.

Conducting Research: Beauty Is Our Business

Working as an academic and having the freedom to pick your own research challenges is the best job one can imagine. However, writing papers is not easy, and the impact and quality of research are difficult to measure.

- How to Write Beautiful Process-and-Data-Science Papers? After 25 years of PhD supervision, I noticed typical recurring problems that make papers look sloppy, difficult to read, and incoherent. The goal is not to write a paper for the sake of writing a paper, but to convey a valuable message that is clear and precise, and to write papers that have an impact and are still understandable a couple of decades later. Our mission should be to create papers of high quality that people want to read and that can stand the test of time. We use Dijkstra's adage Beauty Is Our Business to stress the importance of simplicity, correctness, and cleanness. I wrote this tutorial-style paper to help people improve their writing skills and reflect on what they write from the viewpoint of the reader.

- Yet Another View on Citation Scores. How to evaluate scientific research is a controversial topic. In this blog post, I provide some of my personal views. Whether we like it or not, we need to evaluate the productivity and impact of researchers. In some universities, it has even become politically incorrect to talk about published papers and the number of citations. This creates the risk that evaluations and selections become highly subjective, e.g., based on taste, personal preferences, and criteria not known to the individuals evaluated. My personal opinion is that we cannot avoid using objective data-driven approaches to evaluate research productivity and impact. Therefore, I discuss the challenges related to citation scores. For example, how should one compare a physics paper with thousands of authors published in a US-based journal with a computer science paper with two authors presented at a highly competitive conference in Europe? I was triggered to write this blog post when I came across the datasets provided by John Ioannidis and his colleagues. To address such challenges, they suggest using a normalized citation score and provide data on 200,000 top researchers across all scientific disciplines. I include some findings based on these data and provide several pointers. I expect that many people are not aware of these data and may find them useful and interesting. Enjoy reading!

Books

Below are pointers to some of the 35+ books I have (co-)authored or edited.